Jan 13

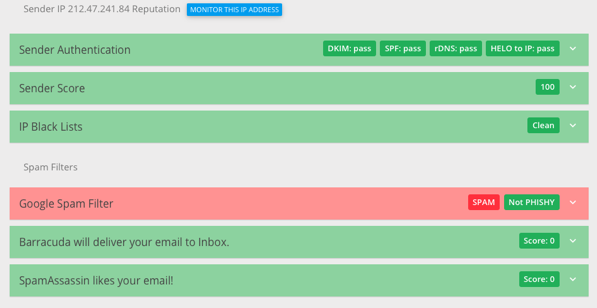

2020

Linux binaries as portable executables: a proposal for hypervisor-mediated Linux syscall reimplementation on macOS

The Linux kernel is well known for its syscall ABI stability, which means that the semantics of the kernel system-call interface don't change between releases (apart from non-breaking bug fixes, and the addition of new features). This feature of Linux is so well established that several alternative implementations of the Linux system-call interface exist. Perhaps the best-known one is FreeBSD's Linuxolator, through which FreeBSD provides a kernel module, linux.ko, which includes a Linux system-call interface implementation. Linux binaries running on FreeBSD are configured to use the Linux syscall interface, rather than the standard FreeBSD one, by changing a pointer in the (kernel level) process structure. On Windows, the original Windows Subsystem for Linux also works by trapping system calls and translating them to appropriate Windows NT system calls.

This method, particularly FreeBSD's implementation, is a very integration-friendly way of running Linux binaries: the binary has immediate and direct access to the host file system and network, can start host-native binaries using fork and exec, and so on -- modulo bugs in the implementation, a Linux binary should be behaviourally indistinguishable from a native binary.
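As a rough illustration of the per-process table switch described above, here is a toy model in Python. It is not FreeBSD code: the `Process` class, the `exec_image` and `syscall` helpers, and the two tiny tables are hypothetical names invented for this sketch. The syscall numbers are the usual x86-64 Linux and FreeBSD ones for `write` and `getpid`, used only to show that the same handler can sit behind different numbers.

```python
import errno
from dataclasses import dataclass
from typing import Callable, Dict


def do_write(data: bytes) -> int:
    """Pretend to write and report how many bytes were accepted."""
    return len(data)


def do_getpid() -> int:
    """Return a fixed, made-up process ID."""
    return 1234


# Two tables mapping syscall numbers to the same underlying handlers.
NATIVE_SYSENT: Dict[int, Callable] = {4: do_write, 20: do_getpid}  # FreeBSD numbering
LINUX_SYSENT: Dict[int, Callable] = {1: do_write, 39: do_getpid}   # Linux x86-64 numbering


@dataclass
class Process:
    name: str
    sysent: Dict[int, Callable]  # the "pointer" that selects the syscall interface


def exec_image(name: str, is_linux_binary: bool) -> Process:
    """Pick the syscall table once, when the binary is loaded."""
    table = LINUX_SYSENT if is_linux_binary else NATIVE_SYSENT
    return Process(name, table)


def syscall(proc: Process, number: int, *args):
    """Dispatch through whichever table the process points at."""
    handler = proc.sysent.get(number)
    if handler is None:
        return -errno.ENOSYS  # not implemented in this toy table
    return handler(*args)
```

A Linux binary calling syscall 39 and a native binary calling syscall 20 both end up in `do_getpid`; everything else about the process (filesystem, network, ability to exec other binaries) is shared, which is what makes this approach integration-friendly.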

By contrast, the typical way to run Linux binaries on a Mac is to use a virtual machine -- use CPU virtualisation features to boot the Linux kernel in its own isolated space, and then run binaries as normal. This has the advantage of compatibility, because you're running real Linux, but it means that the Linux process is rather isolated from the rest of the machine: in particular, it will typically have its own filesystem and network interface, and it runs in its own "world", unable to launch host-native binaries or interact meaningfully with the host system in other ways (indeed, this isolation is a key feature of virtual machines). Even Docker for Mac uses a virtual machine, presumably so that it can be compatible with the many thousands of Dockerfiles and Docker images which assume that they are running on a complete Linux system.

As a POSIX-compatible system (and indeed as a 4.4BSD and FreeBSD derivative), macOS provides very similar core functionality to Linux, so it should be possible to provide a Linux system-call reimplementation which runs natively on macOS. Such a system would provide FreeBSD-like Linuxolator functionality for macOS.

Nonetheless, the virtual-machine approach has its advantages. The narrowness of the interface provided by a virtual machine monitor means that neat tricks, such as task snapshotting, suspension, and network transparency, become possible. The isolation provided by a VM makes it easy to control things like file visibility, and memory and other resource usage. Finally, the host-specific portion can be relatively generic, relying on POSIX functionality and a host-specific hypervisor interface, rather than hooking directly into the host's system-call implementation, which would allow for portability.

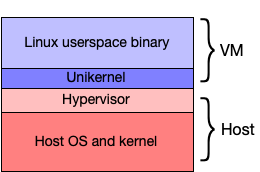

I therefore propose a hybrid implementation: a simple Linux-syscall-compatible unikernel, running in a hypervisor, which communicates with the host to perform network and file operations (using hypercalls). Ideally, the bulk of the syscall complexity can be kept to the unikernel layer. The unikernel would be designed to minimise hypercalls, to improve overall system speed: as just one (classic) example, the frequently-used gettimeofday syscall can be implemented entirely inside the virtual machine. The complete system would look like this:
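To make the hypercall-minimising idea a little more concrete, here is a small Python model of the split. It is only a sketch under stated assumptions: `Host`, `Unikernel`, and the hypercall names `"wall_clock"` and `"write"` are all hypothetical, and a real unikernel would read a guest-visible clock source rather than call `time.monotonic()`. The point is just that `gettimeofday` costs one hypercall at boot and none afterwards, while file writes still have to cross to the host.

```python
import time


class Host:
    """Stands in for the macOS side of the hypervisor (hypothetical)."""

    def __init__(self):
        self.hypercalls = 0  # how many times the guest has exited to the host
        self.files = {}      # fd -> bytes written so far

    def hypercall(self, name, *args):
        self.hypercalls += 1
        if name == "wall_clock":
            return time.time()
        if name == "write":
            fd, data = args
            self.files.setdefault(fd, bytearray()).extend(data)
            return len(data)
        raise ValueError(f"unknown hypercall: {name}")


class Unikernel:
    """Stands in for the guest-side Linux syscall layer (hypothetical)."""

    def __init__(self, host):
        self.host = host
        # One hypercall at boot to learn the wall-clock time...
        self._boot_wall = host.hypercall("wall_clock")
        # ...paired with a guest-local monotonic reading taken at the same moment.
        self._boot_mono = time.monotonic()

    def sys_gettimeofday(self):
        """Answered entirely inside the guest: no hypercall needed."""
        now = self._boot_wall + (time.monotonic() - self._boot_mono)
        sec = int(now)
        usec = int((now - sec) * 1_000_000)
        return sec, usec

    def sys_write(self, fd, data):
        """File I/O has to reach the host, so it is forwarded as a hypercall."""
        return self.host.hypercall("write", fd, bytes(data))
```

In this model, calling `sys_gettimeofday` a thousand times leaves `host.hypercalls` at 1 (the boot-time clock read), whereas each `sys_write` adds one.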

Possible use case: Linux binaries as future-proofed, fixed-interface artefacts

Linus Torvalds is often quoted as saying "We do not break userspace" (though if you click that be warned that he expresses it in classic Linus style, i.e. by ripping into someone). As discussed above, this means that the semantics of the kernel ABI, as defined by the system-call interface, shouldn't change between versions.

This is an important rule for Linux, because the kernel developers do not co-ordinate kernel releases with user-level code -- even fairly low-level code such as the C library. This is in contrast with other systems, such as FreeBSD and macOS, where new kernel releases are tightly co-ordinated with associated user-space changes. However, Linux's ABI stability has other advantages beyond allowing the kernel developers not to get involved in user-space code: it makes it easy, for example, to implement alternative low-level interfaces to the kernel, as can be seen in the variety of libc implementations supporting Linux, or indeed to bypass a low-level interface and communicate directly with the kernel, as the Go programming language does when targeting Linux. And, on the other side, as discussed above, it means that alternative implementations of the Linux syscall interface do not require continuous changes as new versions of Linux are released.

There are several classes of program which are difficult to get running on macOS, relatively stable (in that they do not require continuous updates), and which benefit from tight system integration. Commercial tools provided in binary-only formats (such as those required for working with FPGAs or for designing electronic circuit boards) are one example. Another is compilers such as the GNU Compiler Collection: GCC is notoriously difficult to compile for macOS, and typically benefits from direct access to the host filesystem such that running it in a fully-fledged virtual machine is rather painful.

For these sorts of tools, the approach described above seems appropriate: the binary would ship with the Linux-specific libraries it requires, but would otherwise run as a native application, with direct access to the host's filesystem and direct ability both to run host-native binaries, and to have host-native binaries invoke the binary (for example, one could imagine a macOS-native build system which runs a Linux-native C compiler).
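One way to picture "ships with its own Linux libraries, but otherwise sees the host filesystem" is as a path-resolution rule applied when the binary opens a file. The sketch below is an assumption about how that might work, not part of the proposal itself: `BUNDLED_PREFIXES`, `resolve_path`, and the bundle layout are hypothetical, and the `exists` callback is injected so the example does not depend on any real files.

```python
import posixpath

# Hypothetical: guest paths under these prefixes may be served from the bundle.
BUNDLED_PREFIXES = ("/lib", "/lib64", "/usr/lib")


def resolve_path(guest_path, bundle_root, exists):
    """Map a path requested by the Linux binary to a path on the host.

    Library paths are looked up inside the shipped bundle first; anything
    else, or anything the bundle does not contain, falls through unchanged
    to the host filesystem.
    """
    path = posixpath.normpath(guest_path)
    in_bundled_area = any(
        path == prefix or path.startswith(prefix + "/")
        for prefix in BUNDLED_PREFIXES
    )
    if in_bundled_area:
        candidate = posixpath.join(bundle_root, path.lstrip("/"))
        if exists(candidate):
            return candidate
    return path
```

With a bundle containing `lib/libc.so.6`, a request for `/lib/libc.so.6` resolves into the bundle, while a request for a source file in the user's home directory is passed straight through to the host.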

Possible problems

WSL2: Microsoft recently replaced WSL with WSL2. WSL2 abandons system-call emulation in favour of virtualisation: unlike the original WSL, WSL2 runs a complete Linux kernel in a virtual machine, behaving rather similarly to Docker on macOS. The stated reasons for the change are to improve filesystem performance and to provide better system-call compatibility. I should investigate whether these issues will also be a problem on macOS. It's worth noting that Windows has significantly different filesystem semantics from Linux, and the kernel lacks efficient implementations for system calls like fork(): in other words, it may be that the NT kernel is dissimilar from Linux in ways that the macOS kernel is not.

Other work

Noah is a very similar project: it provides a hypervisor which traps Linux system calls and translates them to macOS native calls. It differs from this proposal in a couple of ways: firstly, the system-call emulation runs on the macOS side of the hypervisor, which limits the amount of optimisation that can be done before making hypercalls; more significantly, Noah downloads an entire Linux distribution and runs Linux binaries in a separate "Linux world", with relatively little synergy with the rest of macOS, rather like a traditional virtual machine.